Talking to Machines

Written by Gloria Wang

In the past decade, the quest for artificial intelligence (AI) has taken off at a pace faster than anyone expected. From the deep learning breakthrough in 2012 to the defeat of the Go world champion in 2016, AI has improved by leaps and bounds, and in recent years this breakneck pace seems only to be accelerating. But accelerating in what way? AI is a broad field, covering areas such as machine learning, robotics, and computer vision. Models are constantly being created, improved, and rendered obsolete. Work is advancing in every area, making it difficult to determine what the “next big thing” will be. But with recent advances in models such as RAG (Retrieval-Augmented Generation), we might have an answer. As a Harvard Business Review article puts it, the “next big breakthrough in AI will be around language” (Wilson & Daugherty 2020).

Natural Language Processing (NLP) is a branch of artificial intelligence that helps computers understand, interpret, and process human language. It is the technology that lets us ask questions of Siri, Google Assistant, or Alexa by voice. To answer questions, a model must already hold the necessary information, which it acquires during pre-training. Changing what a pre-trained model knows is difficult: it requires retraining the entire model on the new information.

But now, a team at Facebook has developed a model whose stored information can be swapped out for new information without retraining the entire model. Named Retrieval-Augmented Generation (RAG), the model was open-sourced just last month as a component of the Hugging Face Transformers library. Lewis et al. presented the architecture in a 2020 paper posted to the open-access repository arXiv (Lewis et al. 2020).

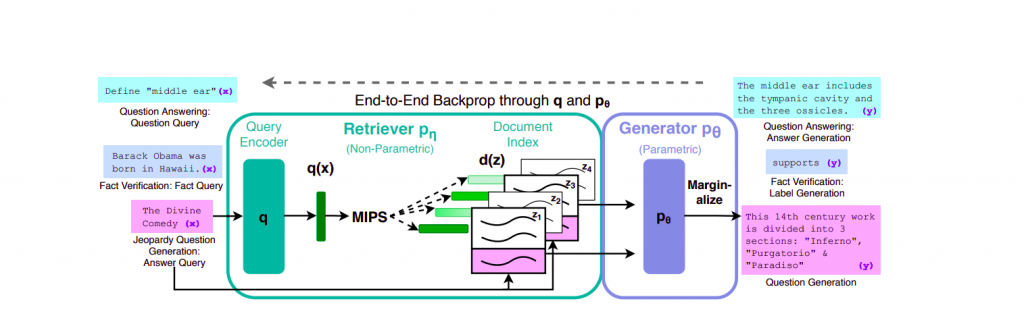

RAG combines an Information Retrieval (IR) component with a sequence-to-sequence (seq2seq) generator, producing a model whose internal knowledge can be altered easily and efficiently while still achieving state-of-the-art results. RAG acts like a standard seq2seq model: a sequence is fed into the model, an encoder captures the context of the input sequence as a hidden state vector and sends it to the decoder, and the decoder produces the output sequence. The difference is that instead of passing the input directly into the generator, RAG first uses the input to retrieve relevant documents from Wikipedia. Facebook explains that when asked “When did the first mammal appear on Earth?”, RAG may look for documents such as “Mammal,” “History of Earth,” and “Evolution of Mammals,” which are then fed into the seq2seq model as context alongside the original input.

An overview of RAG that shows how the Information Retriever passes related documents to the pre-trained encoder-decoder (Generator).
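The retrieve-then-generate flow described above can be illustrated with a toy sketch. This is not Facebook's implementation: the miniature corpus, the word-overlap scoring, the function names, and the `|` separator are all assumptions made for illustration, and the published model uses a learned retriever and a neural generator rather than anything this simple. The sketch only shows the order of operations: score documents against the input, keep the best matches, and hand them to the generator together with the original input.

```python
import re

# Hypothetical miniature corpus standing in for the Wikipedia index.
# Titles follow the article's example; the passage text is invented filler.
DOCUMENTS = {
    "Mammal": "a mammal is an animal and the first mammal appeared long ago",
    "History of Earth": "the history of earth covers the formation of the planet",
    "Go (game)": "go is a board game for two players",
}


def tokenize(text):
    """Lowercase the text and return its set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(query, documents, k=2):
    """Return the titles of the k passages sharing the most words with the query."""
    query_words = tokenize(query)
    scores = {
        title: len(query_words & tokenize(body))
        for title, body in documents.items()
    }
    # Sort by descending score, breaking ties alphabetically by title.
    ranked = sorted(scores, key=lambda title: (-scores[title], title))
    return ranked[:k]


def build_generator_input(query, documents, titles):
    """Join the retrieved passages and the query into one input string.

    The " | " separator is an arbitrary choice for this sketch.
    """
    context = " ".join(documents[title] for title in titles)
    return f"{context} | {query}"


query = "When did the first mammal appear on Earth?"
titles = retrieve(query, DOCUMENTS)
generator_input = build_generator_input(query, DOCUMENTS, titles)
print(titles)
print(generator_input)
```

In a real system, the string built by `build_generator_input` would be passed to a seq2seq model. Here it is only printed, so the effect of retrieval on the generator's input is visible.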

Because RAG can synthesize a response from separate pieces of information drawn from a variety of sources, it can generate more specific, diverse, and factual text than other state-of-the-art seq2seq models. Its true strength, however, lies not in its text generation but in its flexibility. The knowledge that RAG possesses can be changed simply by swapping out the documents used for retrieval, allowing it to adapt quickly to new information.
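The idea of changing what the model knows by swapping documents can be sketched in a few lines. Everything here is hypothetical: the `ToyRetrievalModel` class, its word-overlap retrieval, the template-based `generate` function, and the example passages are stand-ins chosen for illustration, not part of RAG itself. The point is only that the "generator" code never changes, while replacing the document list changes the answer.

```python
import re


def tokens(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def generate(question, passage):
    """Fixed stand-in for a trained generator: it simply echoes the passage."""
    return f"Based on the retrieved text: {passage}"


class ToyRetrievalModel:
    """Hypothetical model: a fixed generator plus a swappable document store."""

    def __init__(self, documents):
        self.documents = list(documents)

    def answer(self, question):
        question_words = tokens(question)
        # Pick the passage that shares the most words with the question.
        best = max(self.documents, key=lambda doc: len(question_words & tokens(doc)))
        return generate(question, best)


# Invented example passages representing an old and a new snapshot of facts.
old_documents = [
    "The latest release of the library is version 1.",
    "Go is a board game for two players.",
]
new_documents = [
    "The latest release of the library is version 2.",
    "Go is a board game for two players.",
]

model = ToyRetrievalModel(old_documents)
question = "What is the latest release of the library?"
before = model.answer(question)

# Update the model's knowledge by replacing the documents; nothing is retrained.
model.documents = list(new_documents)
after = model.answer(question)

print(before)
print(after)
```

The same `generate` function produces both answers. Only the contents of `model.documents` differ between the two calls.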

The world is changing. Minute by minute, facts, or rather our understanding of facts, evolve. To keep up with today’s rapidly changing world, AI systems need access not only to vast quantities of information but also to up-to-date, relevant information. This is where RAG truly shines, paving the way for newer models, newer research, and newer breakthroughs.

References

Lewis, P., et al. (2020). Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks. arXiv. arXiv:2005.11401. Retrieved 28 October 2020, from https://arxiv.org/pdf/2005.11401.pdf

Retrieval Augmented Generation: Streamlining the creation of intelligent natural language processing models. (2020). Facebook. Retrieved 28 October 2020, from https://ai.facebook.com/blog/retrieval-augmented-generation-streamlining-the-creation-of-intelligent-natural-language-processing-models/

Wilson, H. J., & Daugherty, P. R. (2020). The Next Big Breakthrough in AI Will Be Around Language. Harvard Business Review. Retrieved 30 October 2020, from https://hbr.org/2020/09/the-next-big-breakthrough-in-ai-will-be-around-language